— Interpreting the paper “Gaussian Process Regression for Modeling Computational and Experimental Mineral Processing Data”.

Why Use GPR?

As ores become increasingly difficult to process—characterized by lower grades, finer particle sizes, and more complex mineralogy—engineers must make decisions in systems that are high-dimensional, nonlinear, and often supported by limited data.

Traditional methods such as classical regression, neural networks, or tree-based models typically provide only point predictions, without indicating the level of confidence in those predictions. Moreover, these models often struggle to perform reliable interpolation when datasets are small.

Gaussian Process Regression (GPR) offers a different approach. As a probabilistic, non-parametric method, GPR not only provides the predicted mean, but also directly quantifies prediction uncertainty through the posterior variance.

This capability is particularly valuable for engineering decision-making and experimental design, where understanding uncertainty can be as important as the prediction itself.

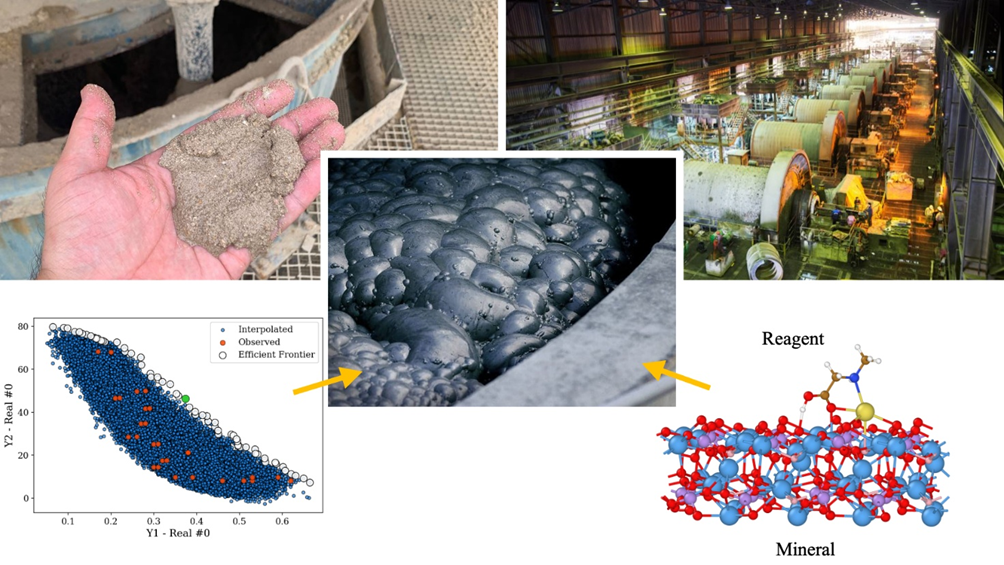

The paper illustrates the practical workflow and value of GPR through two concrete case studies:

·Density Functional Theory (DFT) calculations of molecular adsorption energy

·Laboratory flotation experimental data

These examples demonstrate how GPR can be applied to both computational modeling and experimental datasets in mineral processing.

Core Concepts Explained

1.Gaussian Process (GP):

A Gaussian Process treats an unknown function as a family of random functions. The function values at any set of input locations follow a joint multivariate normal distribution. Through a covariance function (kernel), the similarity between different input points can be described, enabling Bayesian inference for prediction.

2.Kriging:

A classical spatial interpolation method widely used in geostatistics, mathematically equivalent to GPR. It emphasizes first estimating the variogram (or semi-variogram) from observed data and then performing interpolation. The variogram-based GPR used in the paper follows this idea: the variogram is estimated directly from data and then converted into a covariance function used in the GPR model.

3.Variogram / Semi-variogram:

Describes how the difference between sample values (semi-variance γ(h)) changes as the distance h between points increases.

Common parameters include:

Nugget: the jump at extremely short distances, often representing measurement noise or micro-scale variation

Sill: the plateau where the variogram stabilizes

Range: the correlation length beyond which sample points are essentially uncorrelated The variogram form used in the paper resembles a Gaussian-type function.

4.Posterior Mean & Variance:

After incorporating observed data, GPR can provide for any new input point:

a predicted mean μ(x)

a predicted variance σ²(x)

The variance indicates where the model is most uncertain, which is essential for guiding future experiments (active learning/Bayesian optimization).

5.Conditional Realizations:

Based on observed data, multiple possible surfaces or functions can be sampled from the posterior multivariate normal distribution. These conditional realizations visually illustrate the range of possible outcomes beyond the observed samples, helping to understand risk and probabilistic behavior. The paper demonstrates this with several posterior realizations.

What the Paper Did

1. Computational Data Example (DFT, 22 Molecules)

Descriptors: dipole (μ), LUMO, HOMO–LUMO gap, and polarizability (α).

The target variable is the adsorption energy of molecules on a mineral surface (kJ/mol).

Because DFT calculations are computationally expensive and the sample size is small (22 data points), the authors used the following workflow:

variogram estimation → covariance construction → GPR interpolation and uncertainty evaluation in 2D, 3D, and 4D spaces.

They also used posterior realizations and probability maps (for example, P(adsorption < threshold)) to identify molecular regions that are worth further computation or experimental investigation.

The paper found that after adding the fourth variable, polarizability (α), the nugget dropped to zero, indicating that the additional descriptor was able to explain the previously unresolved variability.

2. Experimental Data Example (Flotation, 104 Tests)

There are five input variables—particle size, shape, Reynolds number (Re), micro-turbulence, and surface area flux—and two response variables: the rate constant k and the collision efficiency Ec.

In the sample set, y1 and y2 show a negative correlation.

Because the data were insufficient to directly estimate the cross-variogram required for co-kriging, the paper first applied PCA (Principal Component Analysis) to y1 and y2.

GPR was then performed separately in the PC1/PC2 space, where the principal components are uncorrelated, and the predictions were subsequently transformed back into the original y-space.

This approach makes it possible to obtain a smooth ŷ1–ŷ2 surface and extract the Pareto frontier / efficient frontier. The frontier points can then be mapped back to the input variables, yielding specific process parameter recommendations, with the paper providing example value ranges.

The paper also used an irregular, data-adaptive grid—generated by perturbation sampling around observed points (m = 50,000)—to avoid the computational breakdown that would result from constructing a dense regular grid in 5D space.

Why Use Variogram-Based GPR

Instead of Directly Choosing an RBF Kernel??

In standard GPR workflows, practitioners typically select a fixed kernel (such as RBF or Matérn) and adjust the hyperparameters through maximum likelihood estimation.

However, in geological and mineral processing applications, the spatial correlation structure embedded in the data itself is often critical.

The variogram-based approach first estimates the semi-variogram γ(h) directly from the data, and then converts it into a covariance function C(h) used in the GPR model. In this way, the kernel function reflects the actual correlation patterns observed in the data, rather than relying on a predefined functional form.

The paper emphasizes that when data are scarce or unevenly distributed, this approach is often more robust than blindly applying standard kernels.

Practical Implementation Workflow

Data preprocessing

Normalize or standardize numerical variables and handle missing values.

Dimensional transformation for correlated outputs (if applicable)

If multiple output variables are correlated, apply PCA to project them into uncorrelated principal components.

Estimate the empirical variogram

Group sample pairs by distance bins and compute the semi-variance γ(h).

Fit the parameters—nugget, sill, and range—using nonlinear regression.

Convert the variogram into a covariance function

Derive the covariance as

C(h) = σ²_total − γ(h)

(ensuring C(0) = σ²_total).

Construct the training covariance matrix K, including nugget or noise terms.

Perform GPR (or Kriging)

Compute the posterior mean and variance using Cholesky decomposition for numerical stability.

If sampling is required, draw conditional realizations from the posterior covariance.

Post-processing and decision analysis

If PCA was applied, transform predictions back to the original response variables.

Then compute the Pareto frontier and map the frontier points back to the corresponding ranges of input variables.

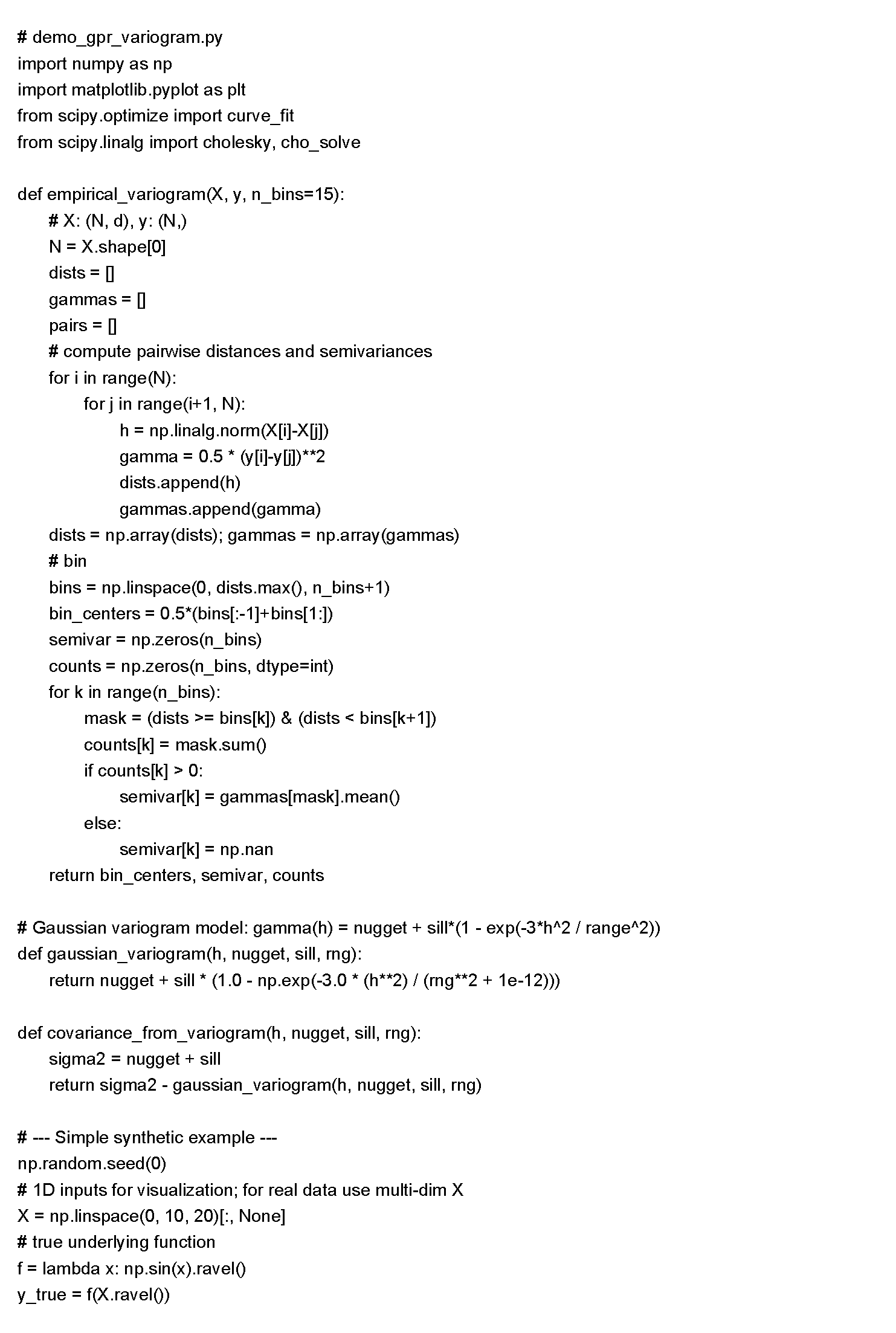

Demonstration Code:

The code below demonstrates how to estimate the empirical variogram from data, fit a Gaussian-type variogram, and then use the resulting covariance matrix to compute the posterior mean and variance in GPR.

This implementation does not rely on the sklearn kernel interface. Instead, it uses direct linear algebra, making the workflow easier to understand and more faithful to the procedure described in the paper.

Please run it in an environment with NumPy, SciPy, and Matplotlib installed:

Code Notes

1. We first use empirical_variogram to estimate the semi-variance, and then fit a Gaussian-type variogram with the parameters nugget, sill, and range.

2. We then use covariance_from_variogram to convert the variogram into a covariance function: C(h) = σ²_total − γ(h).

3. The training covariance matrix K is constructed directly, and the posterior mean and variance are obtained by solving the linear system via Cholesky decomposition, consistent with the workflow described in the paper.

4. For multi-dimensional inputs and multi-output problems—such as the 4D DFT case or the 5D flotation case in the paper—the procedure remains the same. The only difference is that multi-dimensional Euclidean distance is used when constructing distances. For multiple correlated outputs, it is recommended to apply PCA first, or use co-kriging if sufficient data are available.

Practical Recommendations

1. When data are scarce, it is generally preferable to use GPR, which provides uncertainty estimates, rather than a black-box point-prediction model. Uncertainty can then be used to guide subsequent DFT calculations or laboratory experiments.

2. If there are multiple correlated outputs:

use co-kriging when sufficient data are available;

otherwise, apply PCA to decompose the outputs, perform GPR separately, and then transform the predictions back to the original space. This is the approach adopted in the paper.

3. Avoid using regular grids in high-dimensional space. Instead, adopt data-adaptive sampling—for example, perturbation sampling around observed points—or use a query set based on importance sampling. This reduces computational cost while preserving resolution in the regions of interest. The paper uses an irregular grid with m = 50,000.

4. As the sample size grows and N becomes large, the O(N³) computational complexity of standard GPR becomes a bottleneck. In such cases, consider using sparse Gaussian Processes, inducing points, or random feature approximation methods.